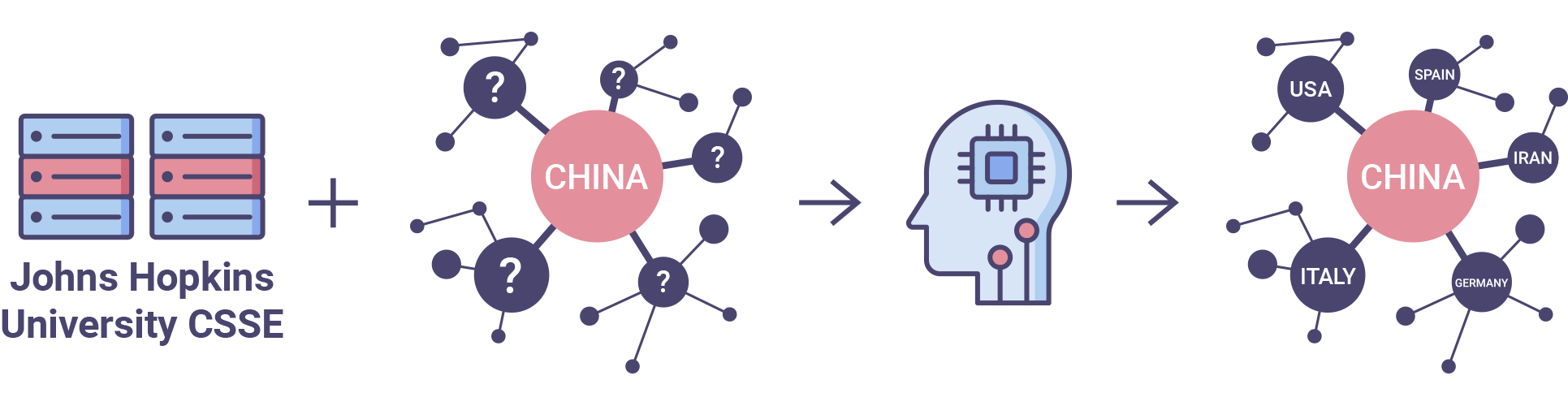

Forecast the global spread of COVID-19

Use any data you can find to predict the future increase in the number of reported cases of COVID-19

Participants need to build an algorithm that predicts, as accurately as possible, the number of reported COVID-19 cases over the next seven days.

The competition aims to draw attention to pandemic forecasting. It may help uncover problems in the data sources or produce a useful forecast from the most reliable data.

The competition channel in Slack ODS: #proj_covid

Description of the competition in Russian

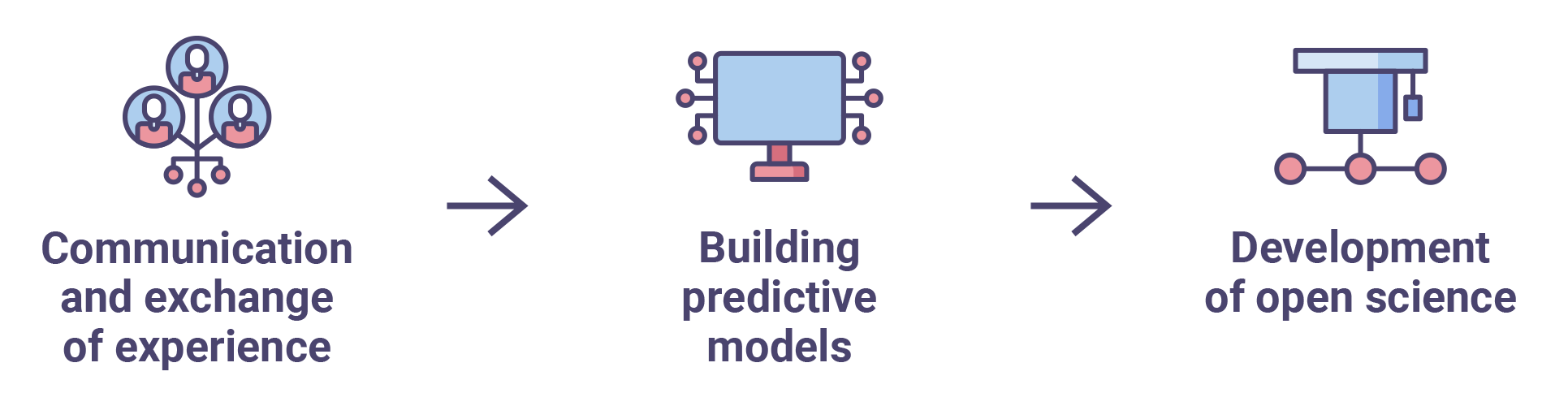

The main goal of the contest is to support open science, advance forecasting techniques, and share experience in building predictive models. Tasks like this, organized as public benchmarks, let scientists, researchers, and engineers test and compare different approaches and develop best practices together, while making them available to the entire research community.

The second goal of the contest is to draw attention to pandemic surveillance and to high-quality modeling of how a pandemic spreads worldwide. Open, high-quality predictive models that estimate the short-term dynamics of an epidemic support quick decision-making and effective responses aimed at preventing the spread of the next pandemic.

Contest rules RU

Contest rules EN

Processing of Personal Data Sberbank RU

Prizes

The winners are determined in three stages:

- Stage 1: for the week 13.04 - 19.04, the winners are determined on Tuesday 21.04 (submission deadline: 12.04, 11:59 p.m., GMT+3 (Moscow)).

- Stage 2: for the week 20.04 - 26.04, the winners are determined on Tuesday 28.04 (submission deadline: 19.04, 11:59 p.m., GMT+3 (Moscow)).

In the second stage of the competition, results are announced both for country forecasts and for Russian regions.

Second-stage prizes go to the participants with the best forecasts for Russian regions.

- Stage 3: for the week 27.04 - 03.05, the winners are determined on Tuesday 05.05 (submission deadline: 26.04, 11:59 p.m., GMT+3 (Moscow)).

In the third (final) stage of the competition, results are announced both for country forecasts and for Russian regions.

Third-stage prizes go to the participants with the best forecasts for Russian regions.

FAQ

What period is forecasted?

The forecast is produced for each day until the end of the competition, and intermediate results are reported one week at a time. A week may seem too short a planning horizon for such a long-running problem. However, the dynamics of the epidemic are strongly influenced by the quarantine measures taken in each country, and neither the exact dates of their introduction nor their effectiveness can be predicted.

Moreover, as of the contest starting date, there is no complete data on all the measures implemented, and the impact of the implemented measures has yet to be assessed.
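To make the seven-day horizon concrete, here is a minimal baseline sketch in Python. It is not part of the contest materials: the function name `forecast_log_linear`, the `window` parameter, and the choice of a log-linear fit are all assumptions made for illustration. The sketch fits a straight line to the logarithm of recent cumulative case counts and extrapolates it, which amounts to assuming that the recent growth rate continues unchanged. This is exactly the assumption that new quarantine measures can break.

```python
import math


def forecast_log_linear(cumulative_cases, horizon=7, window=7):
    """Extrapolate cumulative case counts with a line fitted to log(1 + cases).

    Hypothetical baseline for illustration only; not the organizers' method.
    """
    recent = list(cumulative_cases)[-window:]
    if len(recent) < 2:
        raise ValueError("need at least two observations to fit a trend")

    xs = list(range(len(recent)))
    ys = [math.log1p(c) for c in recent]

    # Ordinary least squares for y = intercept + slope * x.
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = max(sxy / sxx, 0.0)  # cumulative counts cannot decrease
    intercept = y_mean - slope * x_mean

    forecasts = []
    for step in range(1, horizon + 1):
        value = math.expm1(intercept + slope * (xs[-1] + step))
        # Never forecast below the last observed cumulative count.
        forecasts.append(max(value, recent[-1]))
    return forecasts


# Toy series (made-up numbers) in which 1 + cases doubles every day.
toy_series = [2 ** k - 1 for k in range(1, 8)]
week_ahead = forecast_log_linear(toy_series)
```

A baseline like this is mainly useful as a reference point: a submitted model should at least beat naive extrapolation of the latest trend.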

Why predict if there is no confidence in the data validity?

Despite the limitations of the contest task, the algorithms and solutions developed in its course can still be useful, especially as the data become more reliable. The competition is, first of all, a public benchmark of predictive models for COVID-19 epidemic data. Participants should focus primarily on modeling rather than on the engineering aspects and system constraints that would matter if their models had to be deployed immediately in a real environment.

How were the evaluation metrics chosen?

The choice of metrics follows from the features of the task. First, the number of infected people grows exponentially. Second, capturing the overall dynamics of the epidemic in every country is more important than predicting the exact number of infected people in the most affected countries. Third, countries differ in their standards and approaches to COVID-19 testing and are at different stages of the epidemic, so the metric needs to be robust.
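This page does not name the metric itself. As an illustration only, one metric family consistent with the considerations above is an absolute error computed on a logarithmic scale, sketched below in Python. The function name `mean_absolute_log_error` and the use of `log(1 + x)` are assumptions, not the official contest definition. On a log scale, the error depends on the ratio between forecast and actual values, so exponential growth is handled naturally and a country with few cases weighs as much as a country with many.

```python
import math


def mean_absolute_log_error(actual, predicted):
    """Mean of |log(1 + predicted) - log(1 + actual)| over all entries.

    Illustrative assumption, not the official contest metric.
    Inputs are expected to be non-negative case counts.
    """
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    if not actual:
        raise ValueError("at least one value is required")
    total = sum(
        abs(math.log1p(p) - math.log1p(a)) for a, p in zip(actual, predicted)
    )
    return total / len(actual)


# A tenfold overestimate costs the same in a small and a large outbreak
# (made-up numbers, chosen so that 1 + cases is a power of ten).
small_outbreak_error = mean_absolute_log_error([9], [99])
large_outbreak_error = mean_absolute_log_error([999], [9999])
```

With a plain absolute error, the second forecast would be penalized about a hundred times more than the first; on the log scale both miss by the same factor and score the same.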

Please note that the metrics may change during the contest. The final version will be announced no later than a week before the end of the competition.

The organizers welcome suggestions for improvement from the Open Data Science community.
